Question: What can survey research tell us about church attendance?

Social scientists have been tracking self-reported religious service attendance for over seventy years. At present, over 40% of adult Americans claim to attend church (or other religious services) nearly every week. That figure is 25% lower than at its peak in the 1950s, but it has remained remarkably stable for the past four decades, declining at most by a couple of percentage points. That decline should not be taken as evidence for a pattern of long-term secular decline, because the attendance figure was lower during the Great Depression than it is now. Self-reported attendance is one of the bits of evidence that factors into the widely cited observation that the United States is the most religiously observant modern society in the world.

We know two things about these self-reports. First, they are exaggerated, by as much as fifty percent. In other words, if 39% of respondents say that they were in church last weekend, we can only be confident that 26% actually were. Although many observers long suspected that the self-reports were out of line with reality, we owe the demonstration of the extent of the exaggeration to a sociological study in which the authors actually counted attendance at churches in an Ohio county and compared the result with the survey self-reports from the same area. That study, by Kirk Hadaway, Penny Long Marler and Mark Chaves and published in 1993, has since spawned an enormous and technically sophisticated literature.
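
The arithmetic behind the 39% and 26% figures can be made explicit. The sketch below assumes that "exaggerated by fifty percent" means the reported rate is 1.5 times the actual rate; the function name `implied_actual_rate` is a hypothetical helper for illustration, not something taken from the Hadaway, Marler and Chaves study.

```python
def implied_actual_rate(reported_rate: float, overstatement: float = 0.5) -> float:
    """Return the actual rate implied by a self-reported rate.

    Assumes reported = actual * (1 + overstatement), so an overstatement
    of 0.5 means self-reports run fifty percent above reality.
    """
    return reported_rate / (1.0 + overstatement)


# Worked example from the text: 39% reported, fifty percent exaggeration.
actual = implied_actual_rate(0.39)
print(f"{actual:.0%}")  # prints "26%"
```

Put another way, under this assumption one third of the reported figure is inflation: 13 of the 39 percentage points.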

The other thing we know about these self-reports is that they are meaningful in spite of the tendency to exaggerate. In other words, when people report their religious service attendance, they are giving us valid information about themselves even when they exaggerate how often they worship. Decades of work have shown that what sociologists call “attendance” (i.e., self-reported attendance) is a powerful predictor of all sorts of other behavior and attitudes, and self-reported attendance tracks with what we know from other sources about religious observance. Older Americans are more likely to attend than those younger, women more than men, the married more than the single, African Americans more than whites, conservative Protestants more than “Mainline” Protestants, those living in the South and Midwest more than those in the East and West. A recent study by Philip Brenner argues convincingly that the kind of people who say they’re in church every week are similar to those who, based on their time diaries, are in fact there every week. Their religion is important to their identity. Over-reports are not capricious statistical noise.

Is the tendency of survey respondents to exaggerate their own religious attendance a new development? Some observers suggest that a huge decline in actual attendance since the 1950s has been masked by a persistent, and necessarily intensifying, tendency to inflate self-reports. On this view, the relatively level post-1960s track of self-reported attendance, increasingly out of line with a falling curve of actual attendance, is puffed up by a tendency to over-report that precisely compensates for the real decline. I am not aware of any rigorous attempt to test that supposition, but I draw the conclusion, from a relatively recent strand in the literature, that it is mistaken.

A few social scientists have recently taken advantage of the fact that many congregations have been systematically recording actual attendance for many years. That makes it possible to track real attendance over time. In my own study of Mendocino Presbyterian Church, I used 23 years of attendance records to show that attendance was “flat” from 1959 to 1973, averaging about 80, and then shot up with the call of a new pastor, more than doubling in the three years from 1973 to 1976. Economist Laurence Iannaccone and sociologist Sean Everton examined week-to-week fluctuation in attendance from the 1990s into the early 2000s in four west coast congregations (RCA, UCC and 2 American Baptist). Sociologist Paul Olson analyzed weekly attendance in 71 congregations in a midwestern city for the year 2004. Olson and economist David Beckworth compared attendance figures from two midwestern congregations (Episcopal and Lutheran) from the 1940s to the 2000s, focusing on seasonal variation.

It is possible to draw several tentative conclusions from these studies. First of all, as pastors know, week-to-week fluctuation in attendance is endemic. The data plots in the studies I’ve mentioned would be unreadable if the authors had not smoothed wild week-to-week swings by calculating monthly moving averages. When I was new to on-the-ground research in sociology of religion, I was inclined to see significance in such fluctuations, but I was fortunate that the pastor of the church I was studying (in Mendocino) was wise as well as popular. He told me he never took it personally (or to be of religious significance) when someone happened to be missing on a given Sunday. (I have since applied that wisdom to attendance patterns in my classrooms.) To be more precise, the factors that cause someone not to show up in church (or class) on a given day are so numerous, and so much beyond the control of the church (or the college), as to be insignificant from the point of view of the health of the respective institution: bad weather, a sick child, an out-of-town funeral, a flat tire, an accident on the freeway — the list is endless.
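
The smoothing step mentioned above can be sketched in a few lines. This is a minimal illustration, not the procedure used in any of the cited studies: it assumes a trailing four-week window as a stand-in for a "monthly" moving average, and the weekly head counts are invented for the example.

```python
def moving_average(counts, window=4):
    """Trailing moving average: one value per full window of weekly counts."""
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        sum(counts[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(counts))
    ]


# Hypothetical weekly head counts for one congregation (not real data).
weekly = [82, 61, 95, 70, 88, 58, 101, 73]
smoothed = moving_average(weekly)

print(smoothed)  # [77.0, 78.5, 77.75, 79.25, 80.0]
print(max(weekly) - min(weekly))      # raw spread: 43
print(max(smoothed) - min(smoothed))  # smoothed spread: 3.0
```

In this made-up series the raw counts swing by 43 from week to week, while the smoothed series stays within 3, which is why a plotted moving average is readable where the raw counts would not be.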

Second, some fluctuation is quite patterned. Attendance on the Sunday after Easter is not only a let-down from Easter itself, but is often below the regular Sunday average. The same is true for the Sunday after Christmas. More people typically show up on Mother’s Day than on Father’s Day. But especially, attendance varies by the season of the year. It’s up in the fall through Advent, trends down after Easter and drops significantly in the summer. (The paper by Iannaccone and Everton is allusively titled “Never on Sunny Days.”) Olson and Beckworth’s analysis of seven decades of attendance figures for “Trinity Episcopal” and “Good Shepherd Lutheran” shows that seasonal variation is nothing new (after all, Sunday school has taken a seasonal holiday for many decades).

Another trend, one that Olson and Beckworth did not expect to find, sheds additional light on attendance. Their two congregations differ: one is a liberal Episcopal church experiencing long-term decline, the other a larger Lutheran church whose attendance jumped upward in the 1990s when it became more conservative. Despite that difference, the seasonal variation in attendance that both displayed became less pronounced in the 1990s and 2000s. The peaks were lower and the valleys higher. The authors argue that what is happening in both churches is that both have fewer half-hearted attenders (those who go to worship primarily because that’s the behavior expected of respectable people) and a proportionately greater committed core.

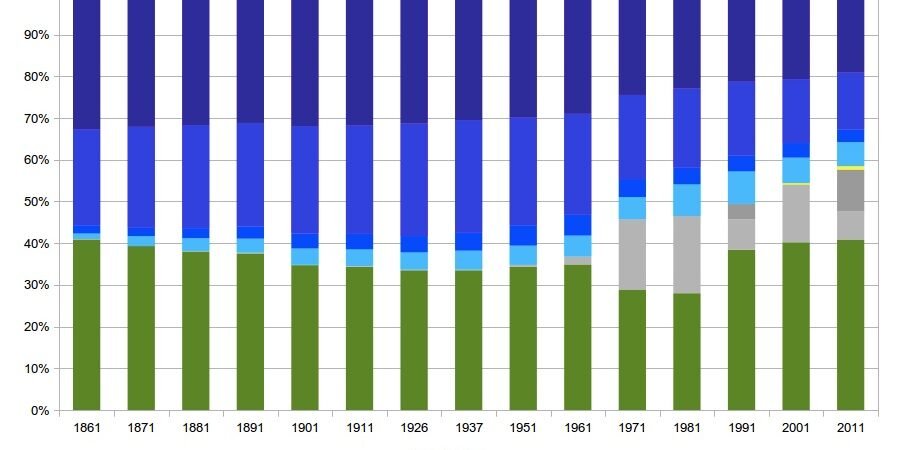

Olson and Beckworth have in mind another finding of American religious statistics, namely the huge rise of religious “nones” since about 1990. In the 1950s, those who embraced no religion were a tiny minority, fewer than five percent. But “nones” today are approaching twenty percent of the population. More Americans have given up on religion. They do not feel obligated to attend church; correlatively, they have no need to pretend that they were in church when they were not. To that extent, reporting religious activity is less subject to what sociologists call social desirability bias.

Another pattern documented in the attendance-counts literature helps explain declining attendance even among the “committed core” for whom attendance is personally meaningful. Iannaccone and Everton’s study of four west coast churches and Olson’s of 71 midwestern churches show drops in attendance on the Sundays of Memorial Day, July 4 and Labor Day in the 1990s and 2000s. I do not know if the summer holiday dip was characteristic of the 1940s and ’50s, but Iannaccone and Everton’s argument, and my own knowledge of social history, suggest that it may be a relatively recent pattern. In 1971, the observance of Memorial Day moved by act of Congress to the last Monday of May, in place of the original May 30. That gave the summer season a notable three-day holiday on each end. I am under the impression that many employers now make it possible for the July 4 holiday also to be part of a three-day weekend. Those increments to Americans’ stock of leisure time arguably combined with greater affluence to create more opportunities for family outings (and missed church services) than likely pertained in the 1940s and ’50s. My pastor, Frank Senn, and I can reel off the names of several genuinely devoted families in our church, including our own, who fit this pattern.

Iannaccone and Everton have a more general point to make. Increasing leisure time and disposable income (since the 1950s, if not in the past decade) are among the factors giving families more options that make attending church a potentially more costly choice for a Sunday activity. Another factor, surely correlated with the rise of “nones,” is the decreasing deference shown to the sacredness of Sunday on the part of schools and other institutions of American civil society. High school athletic competitions (in which many of the youth of our church excel) make it very hard for many families to come to worship every week, even those families who must be counted among the most devoted to the church. Church attendance is increasingly in competition with other options.

The persons Frank Senn and I have in mind are no doubt among those who would have a good reason to overreport their church attendance (as “once a week or more”) if they were to be approached by a survey researcher. They would do this not to prove to the interviewer that they are respectable citizens, but to communicate a valued truth about themselves, that churchgoing is part of who they are. Thus if the social desirability bias that may have inflated self-reported attendance in the past has lost much of its causal force now that religion enjoys lower public esteem, another systematic source of bias, the personal meaning attached to regular attendance, may have replaced it, as Brenner and Olson and Beckworth suggest. So we can expect that self-reported attendance will continue to be inflated, but there is no evidence that it was less inflated in the past than it is today.